Containerizing network functions marked the first step toward bringing agility and accelerating transformation in the networking domain. But it’s the orchestration of those containers with Kubernetes that has truly shifted the momentum — enabling the convergence of cloud-native workloads and AI, and driving the next wave of innovations in telecom and networking.

Kubernetes is not just an IT tool. It's the strategic platform giving telecom operators hyperscaler-like speed, flexibility, and innovation capacity, and the backbone of their shift from connectivity providers to digital service and AI-powered platform providers.

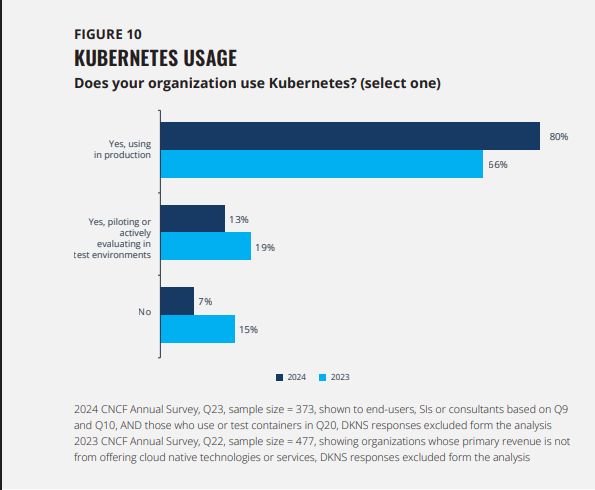

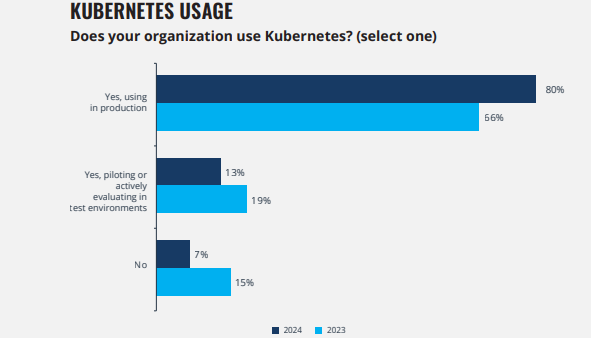

According to CNCF’s annual survey, 93% of companies are either running Kubernetes in production, piloting it, or actively evaluating it. For telcos, the drivers are clear: accelerated 5G deployment, edge and virtualization needs, demand for agility, operational efficiency, and the move away from legacy systems.

Here are three key benefits that stand out for telecom leaders:

Agility & Speed to Market

With Kubernetes, operators adopt software-like delivery models (CI/CD, GitOps, declarative automation). This reduces service rollout times from months to days, enabling telcos to compete with hyperscalers and launch enterprise/vertical solutions faster.
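
To make "declarative automation" a little more concrete, here is a minimal sketch of the reconcile idea behind GitOps-style delivery: the operator declares a desired state, and a loop works out what has to change to get there. This is a toy model, not Kubernetes' actual controller code. The function name `reconcile` and the workload names (`upf`, `smf`, `legacy-probe`) are purely illustrative assumptions.

```python
def reconcile(desired: dict[str, int], observed: dict[str, int]) -> list[str]:
    """Return the actions needed to move observed replica counts to the desired ones.

    Hypothetical helper for illustration; real controllers act on API objects.
    """
    actions = []
    # Walk the declared workloads and compare them with what is running.
    for name, want in sorted(desired.items()):
        have = observed.get(name, 0)
        if have < want:
            actions.append(f"scale up {name}: {have} -> {want}")
        elif have > want:
            actions.append(f"scale down {name}: {have} -> {want}")
    # Anything running that is no longer declared gets removed.
    for name in sorted(set(observed) - set(desired)):
        actions.append(f"delete {name}")
    return actions


# Desired state as it might be declared in Git (illustrative names only).
desired = {"upf": 3, "smf": 2}
# State observed in the cluster.
observed = {"upf": 1, "smf": 2, "legacy-probe": 1}

plan = reconcile(desired, observed)
for action in plan:
    print(action)
```

The point of the pattern is that a rollout becomes a change to the declared state rather than a sequence of manual steps, which is where much of the speed-up comes from.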

Edge & AI Enablement

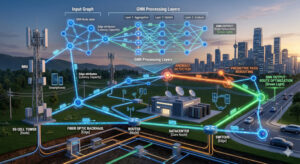

Kubernetes scales seamlessly from core to edge, hosting ultra-low-latency apps, network slicing, and AI/ML pipelines. This underpins new revenue streams — from smart manufacturing to immersive media, connected mobility, and AI-driven customer experience.

Operational Efficiency & Resilience

Kubernetes brings self-healing, automated scaling, and observability, lowering OPEX while increasing reliability. Combined with eBPF, service mesh, and AI-driven orchestration, it provides true carrier-grade performance while reducing operational complexity.
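
For a feel of what "automated scaling" means in practice, the sketch below follows the core formula documented for the Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric), with no change when the ratio is within a tolerance (0.1 by default). It is a simplification under stated assumptions: the function name `desired_replicas` is hypothetical, and min/max replica bounds, stabilization windows, and pod readiness handling are left out.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: float,
                     target_metric: float,
                     tolerance: float = 0.1) -> int:
    """Simplified HPA-style calculation (illustrative, not the real controller)."""
    ratio = current_metric / target_metric
    # Skip scaling when the metric is already close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    return math.ceil(current_replicas * ratio)


# 4 pods averaging 90% CPU against a 60% target: scale out.
scale_out = desired_replicas(4, 90, 60)
# 4 pods at 63%: within tolerance, so nothing changes.
steady = desired_replicas(4, 63, 60)
# 8 pods at 30%: scale in.
scale_in = desired_replicas(8, 30, 60)
print(scale_out, steady, scale_in)
```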

What’s next?

CNCF and the ecosystem are continuously evolving Kubernetes for next-generation workloads. AI, in particular, is pushing the boundaries of network infrastructure. Kubernetes is fast becoming the platform of choice to run AI/ML workloads across clusters of GPUs.

But large AI workloads need more than the GPUs inside a single node. Jobs span many nodes, and those GPUs have to exchange data efficiently over RDMA (Remote Direct Memory Access), which lets a network adapter read and write remote memory without going through the remote CPU. That's where new initiatives like Google's Kubernetes Network Drivers (KND) project come in, proposing the use of Dynamic Resource Allocation (DRA) to request access to specialized networking hardware. It's early-stage and experimental, but it shows how Kubernetes is being reshaped for its second decade.

Some exciting stuff is coming — and I’ll cover more in future posts.
