NVIDIA is pushing hard to break into the telecom market, aiming to monopolize AI chips all the way to the edge of the network. The recent announcement of the “AI Grid” at GTC is clear evidence of this ambition. However, to me, it feels like a desperate attempt to convince telecom operators that they need NVIDIA chips in every corner of their infrastructure.

How? Let’s dive in.

From AI-RAN to the “AI Grid”

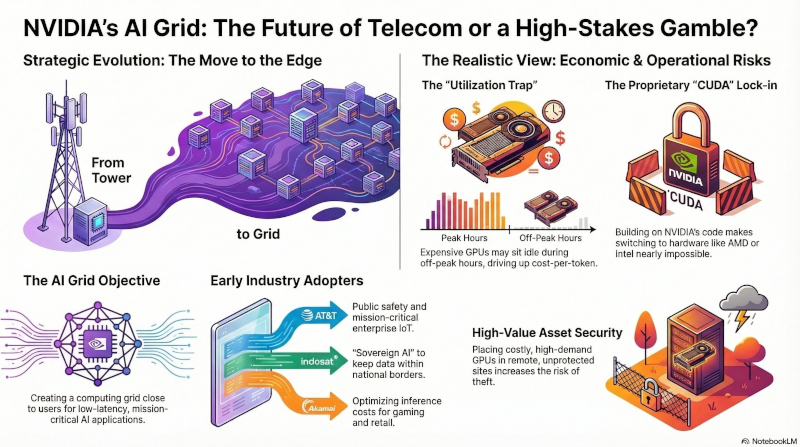

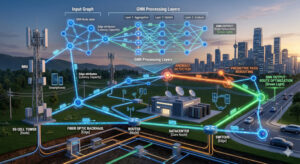

Last week at GTC (GPU Technology Conference), NVIDIA announced collaborations with six major operators to propose an architecture called AI Grids. These are distributed AI infrastructure deployments designed to leverage any network node—whether it’s part of a fixed, wireless, or content delivery network. NVIDIA is branding this as the “next frontier” for AI distribution.

To understand where we are, we have to look at where NVIDIA started. For a while, they’ve been pushing AI-RAN (Artificial Intelligence Radio Access Network). The idea was to embed powerful GPUs directly into cell towers to manage mobile traffic and AI tasks simultaneously.

But telcos haven’t bitten. Adoption has been slow, and interest has been lukewarm. Now, NVIDIA is pivoting to a new buzzword: “AI Grid.”

Instead of trying to put chips in millions of individual cell towers, NVIDIA now suggests placing them in roughly 100,000 “distributed data centers” or repurposed phone exchanges. The goal is to create a computing “grid” that is close to the user, but not necessarily physically bolted to the tower.

Two Sides of the Coin

There are two ways to look at this architecture:

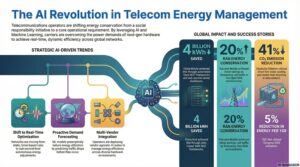

1. The Optimistic View: A Revenue Engine

One could argue the AI Grid is a masterclass in vertical integration. It essentially turns the world’s cellular infrastructure into a distributed extension of NVIDIA’s own data centers. For telcos, this offers a path to monetize their networks by revamping their architecture with NVIDIA chips, enabling low-latency use cases like URLLC (Ultra-Reliable Low-Latency Communications).

2. The Realistic View: Economic and Security Risks

The other side of the story involves brutal economics and significant risks:

- The “Thief” Factor: High-end GPUs are incredibly expensive and in high demand. Industry experts (like Orange’s Bruno Zerbib) have raised concerns that placing these valuable assets in remote, unprotected sites makes them prime targets for theft.

- The “Subscription” Trap: These chips aren’t just expensive to buy; they often come with a “subscription game.” Telcos risk getting stuck paying NVIDIA indefinitely for software updates just to keep their networks efficient.

- Vendor Lock-in: Companies like Ericsson worry that building a network on NVIDIA’s specific code creates a trap. Once your software is inseparable from NVIDIA’s proprietary CUDA platform, switching to another brand becomes nearly impossible.

- The Utilization Trap: Unlike centralized hyperscalers (Google, AWS), telcos face a “utilization trap.” Expensive GPUs might sit idle at remote sites during off-peak hours, which pushes the effective cost per token well above what a centralized data center achieves by keeping its GPUs busy around the clock with batch workloads.
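To make the utilization point concrete, here is a rough back-of-the-envelope sketch. Every number in it is hypothetical (the hourly GPU cost, the peak throughput, and both utilization figures are made up for illustration, not taken from NVIDIA or any operator). It only shows the shape of the problem: the hardware costs the same per hour whether it is busy or idle, so cost per token scales inversely with utilization.

```python
def cost_per_million_tokens(hourly_cost_usd: float,
                            peak_tokens_per_second: float,
                            utilization: float) -> float:
    """Effective cost (USD) per one million tokens served.

    hourly_cost_usd: all-in cost of running the GPU for one hour
        (hypothetical input; paid whether the GPU is busy or idle).
    peak_tokens_per_second: throughput when the GPU is fully loaded.
    utilization: fraction of the hour the GPU is actually serving, in (0, 1].
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    tokens_per_hour = peak_tokens_per_second * 3600 * utilization
    return hourly_cost_usd / tokens_per_hour * 1_000_000


# Hypothetical comparison: same GPU, same hourly cost, different load.
central = cost_per_million_tokens(2.0, 1000, utilization=0.8)  # busy central site
edge = cost_per_million_tokens(2.0, 1000, utilization=0.2)     # mostly idle edge site
print(f"central: ${central:.2f}, edge: ${edge:.2f} per million tokens")
```

With these made-up inputs the edge site ends up four times more expensive per token, purely because it sits idle more often. Real deployments would also have to factor in power, site maintenance, and software costs, which this sketch ignores.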

Perhaps most importantly, as Orange’s CTO Bruno Zerbib pointed out in conversation with Light Reading, computing doesn’t need to be at the tower. As long as it’s within 20 to 100 kilometers of the user, the added latency is small enough for the intended use cases. Putting AI chips in every neighborhood simply doesn’t make sense.
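A quick sanity check on the 20 to 100 kilometer figure: the sketch below estimates the round-trip propagation delay over fiber. It assumes a refractive index of about 1.47 (typical for single-mode fiber, so light travels at roughly two thirds of its vacuum speed) and a fiber run equal to the quoted distance. It deliberately ignores switching, queuing, and processing time, so treat the result as a lower bound rather than a measured latency.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792.458  # speed of light in vacuum
FIBER_REFRACTIVE_INDEX = 1.47          # assumption: typical single-mode fiber


def fiber_round_trip_ms(distance_km: float,
                        refractive_index: float = FIBER_REFRACTIVE_INDEX) -> float:
    """Round-trip propagation delay in milliseconds over a fiber of the given length.

    Only accounts for signal propagation; routers, queues, and the
    inference itself add more on top.
    """
    speed_in_fiber = SPEED_OF_LIGHT_KM_PER_S / refractive_index  # km/s
    return 2 * distance_km / speed_in_fiber * 1000


for distance_km in (20, 100):
    print(f"{distance_km} km: {fiber_round_trip_ms(distance_km):.2f} ms round trip")
```

Under these assumptions the round trip costs roughly 0.2 ms at 20 km and about 1 ms at 100 km, which is the physics behind Zerbib’s argument that compute can sit well away from the tower.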

Who’s Already On Board?

Despite these concerns, six major providers are already piloting the AI Grid. According to NVIDIA, here is how they are using it:

- AT&T: Partnering with Cisco and NVIDIA for IoT-focused AI grids, enabling real-time public safety and mission-critical enterprise apps.

- T-Mobile: Using the latest Blackwell GPUs to explore edge AI for smart-city, industrial, and retail applications, as well as delivery robots.

- Comcast: Turning its broadband footprint into an AI grid for hyper-personalized experiences like conversational AI and cloud gaming.

- Charter/Spectrum: Leveraging 1,000+ edge data centers for high-res graphics rendering with ultra-low latency.

- Indosat Ooredoo Hutchison: Building a “Sovereign AI” grid in Indonesia to keep data within national borders while running localized AI services like Sahabat-AI.

- Akamai: Expanding its grid across 4,400 edge locations to optimize AI inference costs for gaming and retail.

My Take

NVIDIA is an undisputed giant in the AI world—there’s no doubt about that. But the telecom industry moves with caution. Operators are skeptical about the massive costs of integrating AI at every layer, especially while they are still struggling with the high costs of 5G Standalone (SA) deployment.

Most telcos would prefer to keep AI chips in central data centers (the “Core”) rather than out at the “Edge.” Furthermore, while NVIDIA is dominant today, competitors like Intel, AMD, and Arm are fighting for this space, promising similar benefits without the proprietary “lock-in” drawbacks.

Ultimately, telcos want open architecture and COTS (Commercial Off-The-Shelf) components that allow for vendor diversity and cost control. As a result, I find it hard to believe the AI Grid will become the industry standard, despite these high-profile partnerships. Managing that many high-value chips across a distributed network simply adds too much risk for most operators to handle.

What do you think? Is the AI Grid the future of telecom, or just another way for NVIDIA to lock operators into their ecosystem?
